RAG reranking explained: better context, better answers

Learn what RAG reranking is, how it works, and why it’s critical for improving relevance, accuracy, and reliability in retrieval-augmented generation systems.

Have you ever asked an AI assistant a question and gotten an answer that was slightly off? Not completely wrong, just not what you wanted.

In a retrieval-augmented generation (RAG) system, these types of results are not always due to generation issues. Often, the cause is a retrieval problem.

RAG systems are only as good as the context they get. When the wrong documents are included in the context, the model works with bad material, ultimately producing bad answers.

How can this be fixed? With RAG reranking!

In this article, you will learn that:

- RAG reranking is a post-retrieval step that reorders search results by true relevance.

- Choosing the right reranker depends on factors such as your latency budget, data size, accuracy needs, and cost constraints.

- Reranking is not always necessary. You must know when to skip it.

- Reranking does not exist in isolation. It also behaves differently depending on the RAG type being used.

- Tools such as Meilisearch, Cohere, and Hugging Face each play a different role when implementing reranking in the RAG pipeline.

Let’s get into it!

What is RAG reranking?

RAG reranking is an evaluation layer in a RAG system that reorders the retrieved documents before they reach the language model.

For instance, in a vanilla RAG pipeline, retrieval might pull the top 20 documents based on vector similarity. This is a good start, but the set may contain noise. Reranking cuts through that noise by asking a harder question: which of these documents actually answers the user's query?

Documents that do not answer the query are pushed down the list or dropped. This means the context passed to the LLM contains the information it needs to return a relevant answer.

Why does RAG reranking matter?

RAG reranking matters because the retrieved results determine the quality of the LLM response. If you feed the LLM weak context, it is more likely to hallucinate. When you feed it highly relevant documents, its answers tend to be more precise and accurate.

Here is a practical example. You have built a customer support RAG system. One of your users asks, "Why is payment failing for my annual subscription?"

Basic retrieval might return 20 documents about payments. They are all technically related, but most are not useful here.

The document that actually explains annual subscription payment failures might be ranked 14th in the results. If only the top few results reach the language model, it either never sees that document or sees it buried under less relevant ones.

Reranking fixes this. It evaluates all 20 retrieved documents against the query and then pushes the most relevant one to the top. The language model now has the right context it needs.

Because reranking gives the LLM better-targeted context, the LLM tends to hallucinate less. This matters when you are building an AI system that users can trust.

How does RAG reranking work?

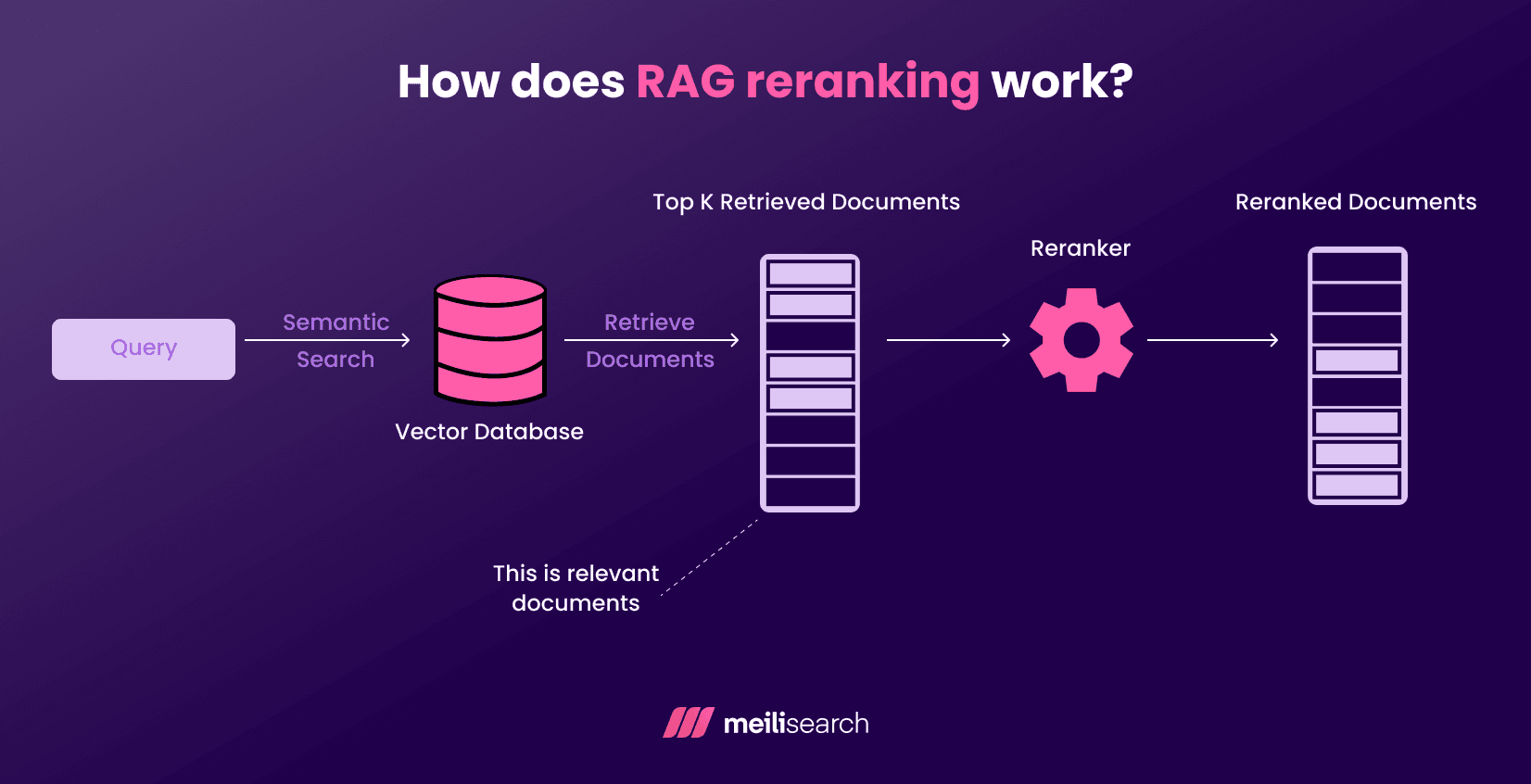

RAG reranking works in two stages.

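First, the initial retrieval happens. A vector search returns the top N documents based on their similarity to the query embedding. N is a tunable parameter: large enough that the right document is likely to be in the set, small enough that the reranker can score every candidate within your latency budget.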

Next, the reranker receives each retrieved document along with the original query.

Under the hood, the reranker does the following:

- Reads both the query and the document together.

- Assigns an independent relevance score to each document.

- Reorders the documents based on their scores.

- Retains only the top-ranked documents. These are the documents that will be passed to the large language model's context window. The others are dropped.
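The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production reranker: `overlap_score` is a hypothetical stand-in for a real reranking model (it only counts shared words), and the function names, sample documents, and `top_k` value are assumptions made for the example.

```python
from typing import Callable, List, Tuple


def overlap_score(query: str, document: str) -> float:
    """Toy stand-in for a reranker model: fraction of query terms found in the document."""
    query_terms = set(query.lower().split())
    doc_terms = set(document.lower().split())
    if not query_terms:
        return 0.0
    return len(query_terms & doc_terms) / len(query_terms)


def rerank(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float] = overlap_score,
    top_k: int = 3,
) -> List[Tuple[str, float]]:
    """Score each (query, document) pair independently, sort, and keep the top_k."""
    # Steps 1 and 2: read query and document together, assign one score per document.
    scored = [(doc, score_fn(query, doc)) for doc in candidates]
    # Step 3: reorder by score, highest first (the sort is stable, so ties keep retrieval order).
    scored.sort(key=lambda pair: pair[1], reverse=True)
    # Step 4: keep only the top-ranked documents for the LLM context.
    return scored[:top_k]


# Hypothetical candidates returned by the initial retrieval.
candidates = [
    "how to update your payment method",
    "refund policy for monthly plans",
    "why payment fails for an annual subscription renewal",
]
top = rerank("payment failing annual subscription", candidates, top_k=2)
```

In a real pipeline, `score_fn` would call a trained reranking model instead of comparing words, but the surrounding score, sort, and truncate logic would look much the same.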

What happens before reranking?

Before the reranking process, the system runs an initial retrieval. At this stage, it may include irrelevant documents.

Here are some RAG techniques that are used in the initial retrieval process.

- Embedding search: The user query is converted into a vector and then compared against the document embeddings in your vector database. Embeddings close to the query vector are considered semantically similar and returned.

- Keyword retrieval: This is traditional lexical search, typically BM25, which matches exact terms. It returns documents that contain the query's keywords, which makes it useful for finding exact names, product codes, and other identifiers.

- Hybrid retrieval: This combines the two approaches. It captures both the semantic meaning and exact matches. Most RAG systems use hybrid retrieval before reranking because it returns better candidate documents than each approach on its own.
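The article does not say how the keyword and embedding results are combined in hybrid retrieval. One widely used option is reciprocal rank fusion (RRF), sketched below as an assumption rather than as the method any particular tool uses. The document IDs and both result lists are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists of document IDs into a single ranked list.

    Each document earns 1 / (k + rank) from every list it appears in
    (rank starts at 1). k = 60 is a commonly used default, not a tuned value.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by ID so the output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_hits = ["doc_a", "doc_b", "doc_c"]  # hypothetical BM25 results
vector_hits = ["doc_c", "doc_a", "doc_d"]   # hypothetical embedding results
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

Documents that appear in both lists (`doc_a` and `doc_c` here) collect score from each, so they rise above documents found by only one retriever. The fused list is what would then be handed to the reranker.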

What happens during reranking?

During reranking, every candidate document gets evaluated against the original query. The reranker evaluates how well each document answers the question.

A cross-encoder model is most often used for this evaluation. The model reads the user query and document together as a single input and does the following:

- Scoring: Each query-document pair receives an independent relevance score, typically normalized to a value between 0 and 1. A higher score indicates a stronger relationship between the pair.

- Relevance estimation: The model answers deeper questions about each document's relevance. Does this document directly answer the question, or only partially? Documents with superficial relevance are penalized (their scores are reduced), while those with genuine relevance are rewarded (their scores increase).

- Reordering: The documents are sorted based on their new relevance scores. The highest-scoring documents move to the top of the list.
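Scores in the 0 to 1 range are commonly obtained by passing a model's unbounded raw output (a logit) through a sigmoid. The sketch below assumes that setup; the raw scores and document names are invented for the example, and a given reranker may normalize its scores differently or not at all.

```python
import math
from typing import Dict, List, Tuple


def sigmoid(x: float) -> float:
    """Map an unbounded raw score to the (0, 1) range, avoiding overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def normalize_and_sort(raw_scores: Dict[str, float]) -> List[Tuple[str, float]]:
    """Convert raw scores to relevance scores and order documents best-first."""
    scored = [(doc_id, sigmoid(logit)) for doc_id, logit in raw_scores.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical raw model outputs for three candidate documents.
raw = {"billing_overview": -1.2, "annual_plan_failures": 3.4, "refund_policy": 0.0}
ranked = normalize_and_sort(raw)
```

Because the sigmoid is monotonic, normalizing does not change the order. It only makes the scores easier to compare against a fixed cutoff.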

What types of rerankers exist?

Rerankers come in different forms. Some focus on speed while others focus on accuracy. Your choice should depend on your specific needs:

- Cross-encoder rerankers: The most accurate option. These models read the query and each document together and analyze their relationship. However, evaluating every query-document pair individually is expensive, especially when dealing with large candidate pools.

- Lightweight rerankers: Work best in production environments that require smaller and faster models. They are not as accurate as cross-encoder rerankers, but are better than the basic retrieval methods.

- LLM-based rerankers: Use LLMs as rerankers. They are also accurate but can sometimes be slow and expensive.

- Hybrid rerankers: Combine multiple approaches, such as a fast first pass followed by a more accurate second pass. Many developers use them because they balance speed and quality.

What are cross-encoder rerankers?

Cross-encoder rerankers (or simply cross-encoders) are reranking models that evaluate query-document pairs jointly as a single input. They read both together and then produce a relevance score based on their combined analysis.

For example, Hugging Face hosts BERT-style models that have been fine-tuned as cross-encoders. Such a model takes the query and the document as one input sequence and outputs a single score that reflects how well they match.

A major disadvantage of cross-encoders is latency. For instance, if you have 50 documents to check, the cross-encoder must run its analysis 50 times.

Because reranking is an extra step on top of retrieval, running the scoring 50 times per query can add noticeable lag.
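A back-of-the-envelope estimate makes the latency cost concrete. The timings below are hypothetical placeholders, not benchmarks; measure your own model and hardware before relying on any number. The sketch also assumes a simple model in which latency is the number of forward passes times the time per pass.

```python
import math


def estimated_rerank_latency_ms(
    num_candidates: int, ms_per_batch: float, batch_size: int = 1
) -> float:
    """Rough estimate: one forward pass per batch of query-document pairs."""
    num_batches = math.ceil(num_candidates / batch_size)
    return num_batches * ms_per_batch


# Hypothetical timings: 20 ms for a single pair, 60 ms for a batch of 16 pairs.
one_by_one = estimated_rerank_latency_ms(50, ms_per_batch=20.0)
batched = estimated_rerank_latency_ms(50, ms_per_batch=60.0, batch_size=16)
```

Under these assumed numbers, scoring 50 documents one at a time costs about a second, while batching brings it down to a few hundred milliseconds. Batching does not reduce the number of pairs scored, it only amortizes per-call overhead, so shrinking the candidate pool is the other lever.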

What are bi-encoder rerankers?

Bi-encoder rerankers encode the queries and documents separately into vector representations, then compare them using cosine similarity.

The biggest advantage here is efficiency. Each document is encoded only once, ahead of time, and its vector is stored.

So when a user asks a question, you only need to create an embedding for that single query. Then you compute the cosine similarity between the query vector and each document vector.

This is easy for developers to implement. Frameworks such as LangChain let you set up a bi-encoder retrieval step with just a few lines of code, and Sentence Transformers (a library whose models are hosted on Hugging Face) provides pre-trained encoders.

However, bi-encoder rerankers can miss the subtle context that a cross-encoder would catch. This is because they never see the query and the document simultaneously.

Many production-grade systems use a hybrid approach. They start with a bi-encoder for the initial and broad search. Then, they use a more precise cross-encoder to rerank the top results.
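To illustrate the bi-encoder side, here is a minimal sketch of ranking by cosine similarity over precomputed document vectors. The three-dimensional vectors and document names are toy values chosen for readability. Real embeddings come from an encoder model and have hundreds of dimensions.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(
    query_vector: Sequence[float], doc_vectors: Dict[str, Sequence[float]]
) -> List[str]:
    """Return document IDs ordered from most to least similar to the query."""
    return sorted(
        doc_vectors,
        key=lambda doc_id: cosine_similarity(query_vector, doc_vectors[doc_id]),
        reverse=True,
    )


# Document vectors are computed once at indexing time and stored (toy values).
doc_vectors = {
    "annual_billing": [0.9, 0.1, 0.0],
    "password_reset": [0.0, 0.2, 0.9],
    "payment_methods": [0.6, 0.6, 0.1],
}
# Only the query needs to be encoded at request time (toy value).
query_vector = [1.0, 0.0, 0.0]
ranking = rank_by_similarity(query_vector, doc_vectors)
```

In the two-stage setup described above, the first few entries of `ranking` would become the candidate pool that a cross-encoder then rescores pair by pair.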

When should LLM rerankers be used?

Consider using a large language model (LLM) as a reranker when achieving accuracy is your main goal.

Any capable instruction-following model can play this role. An open-weight model such as Llama is one example.

The process is straightforward:

- First, you take the top candidate documents from your initial search and present them to the LLM.

- You then prompt it to rank them based on how well they answer the original query.

- The LLM reads all the documents.

- Finally, it evaluates how well they answer the query and returns an ordered list of results.
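The process above can be sketched as a prompt builder plus a defensive parser for the model's reply. Everything here is an illustrative assumption: the prompt wording, the function names, and `fake_llm`, which stands in for a real model call so the example runs without any API.

```python
import re
from typing import Callable, List


def build_rerank_prompt(query: str, documents: List[str]) -> str:
    """Present the numbered candidates and ask for an ordering."""
    numbered = "\n".join(f"[{i}] {doc}" for i, doc in enumerate(documents, start=1))
    return (
        "Rank the documents below by how well they answer the query.\n"
        f"Query: {query}\n"
        f"Documents:\n{numbered}\n"
        "Reply with the document numbers only, best first, separated by commas."
    )


def parse_ranking(reply: str, num_documents: int) -> List[int]:
    """Extract a valid ordering (0-based indices) from the model's reply."""
    order: List[int] = []
    for token in re.findall(r"\d+", reply):
        index = int(token) - 1
        # Ignore out-of-range numbers and duplicates.
        if 0 <= index < num_documents and index not in order:
            order.append(index)
    # Append any documents the model skipped, in their original order.
    order.extend(i for i in range(num_documents) if i not in order)
    return order


def llm_rerank(query: str, documents: List[str], call_llm: Callable[[str], str]) -> List[str]:
    """Ask the LLM for an ordering and return the documents in that order."""
    reply = call_llm(build_rerank_prompt(query, documents))
    return [documents[i] for i in parse_ranking(reply, len(documents))]


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; always returns a fixed reply."""
    return "3, 1"


docs = ["update a card", "reset a password", "annual renewal payment failures"]
reranked = llm_rerank("why is my annual payment failing", docs, fake_llm)
```

The parser is deliberately forgiving because LLM output is not guaranteed to follow the requested format. Skipped or invalid entries fall back to the original retrieval order instead of being lost.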

In case you are still unsure if it is worth your investment, here are some signals that it’s time to consider an LLM-based reranker:

- Low precision from standard rerankers: If off-the-shelf cross-encoders, such as those on Hugging Face, still surface irrelevant documents in their top results, LLM reranking can apply more nuanced reasoning.

- Noisy context: Sometimes, your retrieved documents can contain a mix of relevant and irrelevant content. LLMs are good at cutting through this noise.

- Long documents: LLM rerankers can read long documents in full, whereas smaller reranking models often have to truncate their input to a few hundred tokens.

What problems does RAG reranking solve?

RAG reranking addresses the problem of low-quality, low-relevance answers from an AI model.

Here is how.

- Relevance gaps: Reranking uses a cross-encoder model that jointly reads the query and each retrieved document. This catches relevance that pure similarity scoring in vector search may miss.

- AI hallucinations: When the retrieved context is off-target, the AI model tries to fill in the blanks. Reranking reduces the chances of having irrelevant context and, in turn, ensures you get more grounded answers.

- Context dilution: Many LLMs have a limited context window. If irrelevant documents eat into that space, operating the LLM costs more, and it still returns inaccurate answers. Reranking filters the noise so the model gets exactly what it needs as context.

- Poor top-k retrieval: The best document is not always ranked first; it sometimes ranks in the bottom positions. Reranking reshuffles that order so the most useful result is on top.

Now, let's take a look at some limitations of RAG reranking.

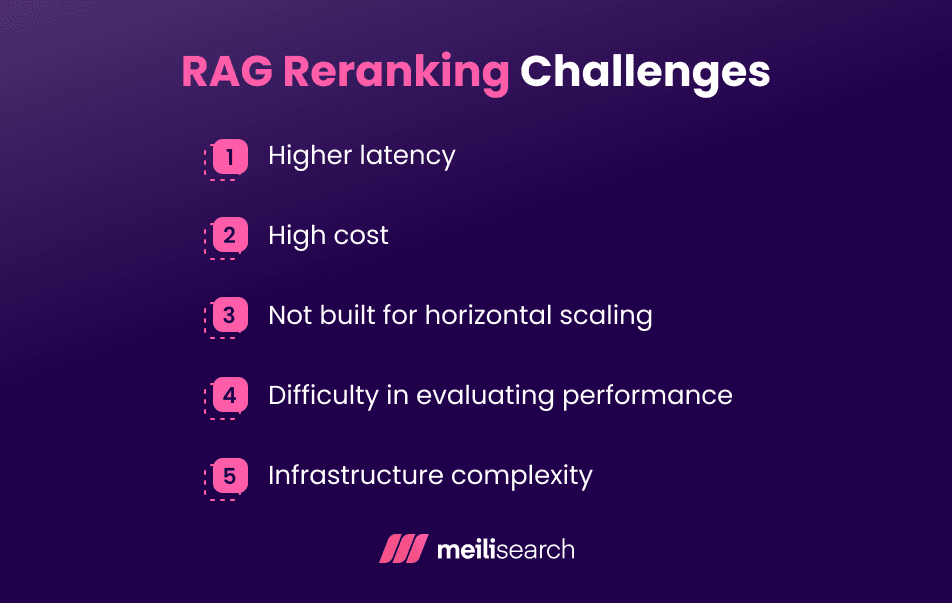

What are common RAG reranking challenges?

RAG reranking challenges include latency, cost, scaling, evaluation, and infrastructure complexity. Let’s discuss them in more detail.

- Latency: Cross-encoder models score each document individually against the query. That can sometimes be slow, especially at a large scale.

- Cost: Running a powerful reranker requires substantial computational power per query.

- Scaling: What works cleanly in a prototype can start to struggle under real load. Reranking adds model inference to every query, so engineering teams often have to plan for batching and for scaling out inference capacity themselves.

- Evaluation: Measuring reranking quality requires careful offline testing, and most teams lack the infrastructure to support it.

- Infrastructure complexity: If you have a RAG reranker, you are running three components separately: a retrieval model, a reranking model, and an LLM. Each one can fail independently, making such systems more complex to manage.

How do you evaluate the quality of RAG reranking?

The quality of RAG reranking is measured by asking one key question: Are the right documents ranking higher than before?

There are two levels to evaluating reranking quality: retrieval-level metrics and downstream answer quality.

- Retrieval-level metrics evaluate the ranked list directly. These metrics include:

  - Precision@k: Of the top k results returned, how many are relevant?

  - Recall@k: Out of all relevant documents that exist, how many made it into the top k?

  - MRR (Mean Reciprocal Rank): How high does the first relevant document appear in the results? Each query scores 1 divided by the rank of its first relevant document, and MRR is the average across queries, so higher is better.

- Downstream answer quality is evaluated by feeding the reranked context into your LLM and then reviewing the output. Are the answers more accurate? Are there fewer hallucinations? The answers to these questions tell you whether your reranker is actually helping.
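The retrieval-level metrics are simple enough to compute yourself. The sketch below follows the standard definitions of Precision@k, Recall@k, and MRR. The document IDs and the "before" and "after" rankings are made-up data meant to show how a before-and-after comparison might look.

```python
from typing import List, Sequence, Set


def precision_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top k results that are relevant (divides by k, by convention)."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for doc_id in ranked[:k] if doc_id in relevant) / k


def recall_at_k(ranked: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return sum(1 for doc_id in ranked[:k] if doc_id in relevant) / len(relevant)


def reciprocal_rank(ranked: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant document, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(ranked, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(rankings: List[Sequence[str]], relevants: List[Set[str]]) -> float:
    """Average reciprocal rank over a set of queries."""
    values = [reciprocal_rank(r, rel) for r, rel in zip(rankings, relevants)]
    return sum(values) / len(values) if values else 0.0


# Hypothetical labeled example: d3 and d7 are the relevant documents for one query.
relevant = {"d3", "d7"}
before = ["d1", "d2", "d3", "d4", "d7"]  # order from initial retrieval
after = ["d3", "d7", "d1", "d2", "d4"]   # order after reranking

p_before, p_after = precision_at_k(before, relevant, 2), precision_at_k(after, relevant, 2)
rr_before, rr_after = reciprocal_rank(before, relevant), reciprocal_rank(after, relevant)
```

On this toy query, Precision@2 and the reciprocal rank both improve after reranking. On a real test set you would average these values over many labeled queries before drawing conclusions.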

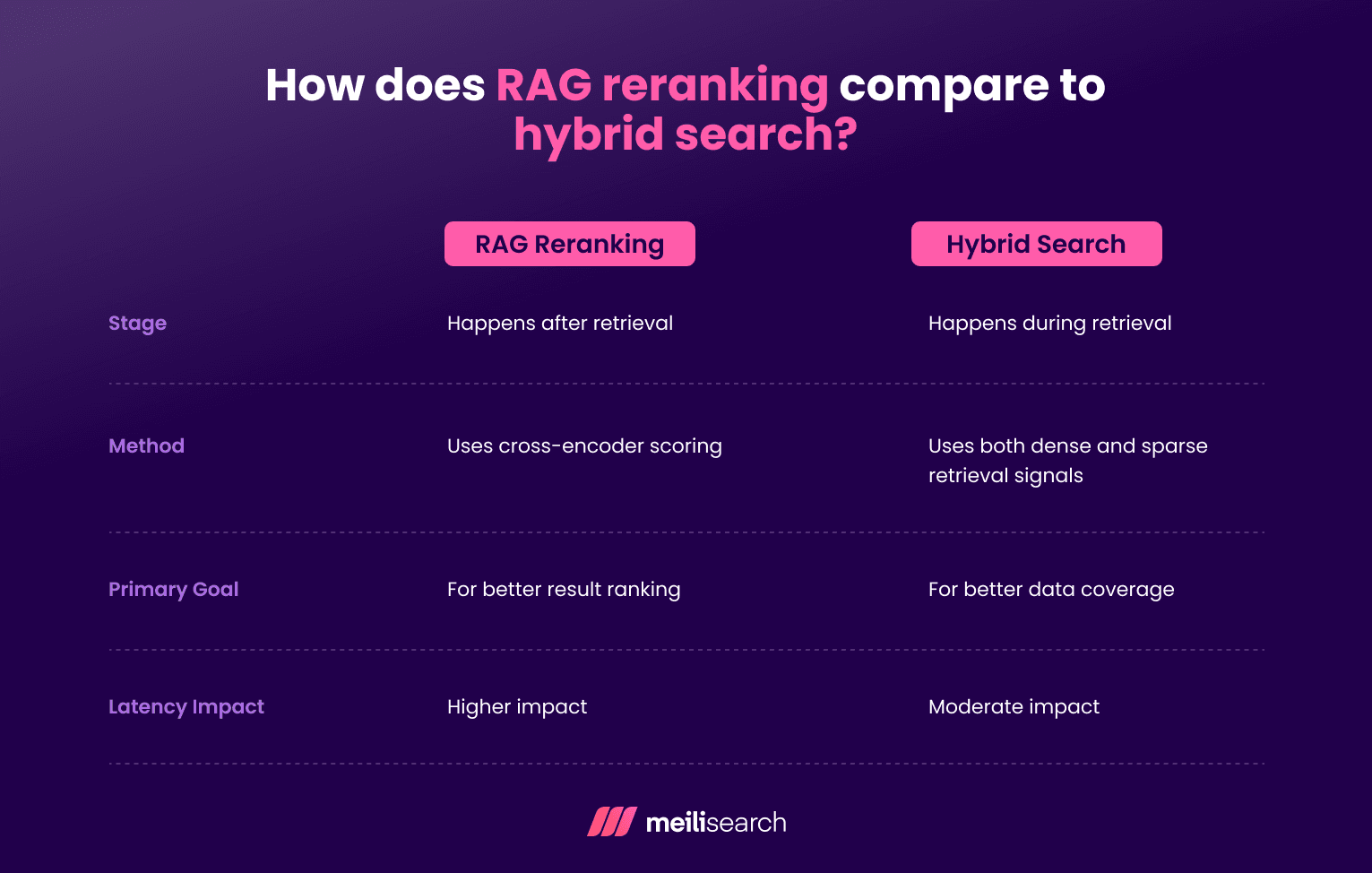

How does RAG reranking compare to hybrid search?

The main difference between reranking and hybrid search lies in their roles in the RAG pipeline.

Hybrid search affects how the documents are retrieved. Reranking changes how the retrieved documents are ordered. Here is a more detailed overview of their differences:

- Stage: Hybrid search runs first, combining dense vector search and sparse keyword search. Reranking runs after the retrieval. It sorts the retrieved documents by true relevance.

- Method: Hybrid search fuses two retrieval signals, while reranking reads the query with each document together.

- Primary goal: Hybrid search solves coverage. It ensures the right documents are in the retrieved set. Reranking solves the ordering problem, ensuring the best documents rise to the top.

- Latency impact: Hybrid search has a moderate impact on latency. Reranking has a greater effect because each candidate document is scored individually.

How do you choose a RAG reranker?

There is no single, universally right RAG reranker, so choose the one that best fits your needs.

Here are some of the factors to consider:

- Data size: If your corpus and candidate pools are small, you can afford a heavier cross-encoder model. With larger pools you need something leaner, otherwise scoring thousands of documents per query will quickly become a bottleneck. A good choice in that case is a bi-encoder or a lightweight cross-encoder.

- Latency budget: If your application needs responses in under a second, avoid large reranking models and go for a small, fast one. Always know your ceiling before you pick a model. Using a cross-encoder built on MiniLM is a good starting point, especially when speed is a priority.

- Cost: API-based rerankers (such as Cohere) are fast to deploy but can become expensive as you scale up. Self-hosted models carry infrastructure overhead instead. Neither is free, but their cost structures differ. Choose the one that works best for you.

What tools support RAG reranking?

Reranking support can be found in vector databases, search engines, ML frameworks, and managed platforms.

Here are some tools that support RAG reranking:

- Vector databases: Pinecone and Weaviate both support reranking either internally or by plugging directly into external reranking models.

- Search engines: Meilisearch orders results by relevance through its built-in ranking system and hybrid search. This makes it a useful tool for teams already building search functionality.

- Machine learning frameworks: Hugging Face offers various cross-encoder models for use in a RAG pipeline. Sentence Transformers is a widely used library for building and fine-tuning rerankers.

- Managed platforms: Cohere Rerank and Google Vertex AI offer reranking as a straight API call. You do not have to manage any infrastructure.

How does Meilisearch support RAG reranking?

Meilisearch handles search and relevance quite well. It is well-suited to support the retrieval step within a RAG pipeline and is designed to deliver highly relevant results quickly.

Meilisearch has a sophisticated, built-in ranking system. It automatically sorts the results by where the query appears in the documents and how closely it matches. This ensures that the initial set of retrieved documents is of high quality.

It also offers hybrid search capabilities, so you get the precision of keywords and the contextual understanding of vectors.

Additionally, Meilisearch has a powerful filtering system that lets you narrow the search space before the retrieval process begins. If there is less noise going in, less reranking work is needed.

Meilisearch is ideal if your team wants both a fast setup and a clean integration with an LLM layer.

When is reranking unnecessary in RAG?

There are situations where the added latency and cost of reranking are not worth it.

Here are some scenarios where reranking may not be needed:

- Simple datasets: When the documents are clean and clearly distinct from one another, vector similarity alone is often enough. There is not enough noise in the retrieval pool to justify a second scoring pass.

- Short documents: Reranking is useful when documents are long, as relevant information can be buried within them. Short documents are easier for vector embeddings to represent accurately.

- Low-stakes use cases: There are environments where a slightly imperfect result has no real consequences, such as internal knowledge bases or searches of developer documentation. The tolerance for imperfection is high enough that applying reranking becomes an expensive solution to a cheap problem.

- Latency-sensitive applications: If your system needs to respond quickly and retrieval quality is already acceptable, you do not need a reranker.

What are the best practices for RAG reranking?

Here are some best practices you can apply when building a RAG reranking system:

- Retrieve more than you think you will need: A reranker can only work with what the retrieval gives it. A larger candidate pool gives it a better chance of finding the right document, at the cost of more scoring work.

- Choose the reranker based on your use case: If accuracy is essential for your users, then go with a full-size cross-encoder. If speed is more important, then go for a lighter model, such as one built on MiniLM.

- Build evaluation before you scale: Do not wait until the system is live to know whether reranking can help you. Build a labeled test set early and measure the precision@k and MRR before and after reranking.

- Treat it as a pipeline, not a separate feature: Retrieval, reranking, and generation interact with one another. So tune them together.

- Iterate on candidate size and cutoff thresholds: There is no magic number for how many candidates to retrieve or rerank. The right answer depends on your data. The best way to find what works is to test different configurations.

- Know the RAG type you will use: Understanding reranking also means understanding how it works with different RAG architectures. Modular RAG systems can slot reranking in as a swappable component. In speculative RAG, reranking plays a more decisive role.
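The advice on iterating over candidate size and cutoff thresholds can be made concrete with a small sweep. The sketch below is illustrative only: the scores, the `top_k` values, and the `min_score` thresholds are assumptions, and in practice you would judge each configuration by your evaluation metrics, not by how many documents survive.

```python
from typing import Dict, List, Tuple


def select_context(
    scored_docs: List[Tuple[str, float]], top_k: int, min_score: float
) -> List[Tuple[str, float]]:
    """Keep at most top_k documents whose reranker score is at least min_score."""
    ordered = sorted(scored_docs, key=lambda pair: pair[1], reverse=True)
    kept = [(doc_id, score) for doc_id, score in ordered if score >= min_score]
    return kept[:top_k]


# Hypothetical reranker scores for four candidates.
scored = [("a", 0.92), ("b", 0.81), ("c", 0.40), ("d", 0.15)]

# Try a few configurations and record how many documents each one passes to the LLM.
sweep: Dict[Tuple[int, float], int] = {
    (top_k, min_score): len(select_context(scored, top_k, min_score))
    for top_k in (1, 3)
    for min_score in (0.3, 0.5)
}
```

A score threshold lets the context shrink when few candidates are truly relevant, while `top_k` caps it when many are. Testing both against a labeled set is how you find values that suit your data.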

What RAG reranking means for building better RAG systems

Retrieval is the starting point of a RAG pipeline. Reranking is what turns a RAG system that finds related documents into one that finds the right documents.

Ultimately, the quality of what goes into the context window determines the quality of what comes out. Get that right, and the rest of the RAG pipeline has a solid foundation to build on.