How RAG for customer support improves accuracy at scale

Discover how RAG for customer support improves accuracy, reduces hallucinations, and powers scalable AI support systems.

Consider this scenario: one of your customers asks a customer support bot about a newly purchased product. Instead of being helpful, it returns a three-paragraph answer about something completely unrelated.

The customer is confused, still without an answer, and probably losing trust in your business.

That is what happens when AI generates answers just from its training data rather than also retrieving relevant information from external knowledge sources.

That is why using a RAG-based AI system for customer support is recommended.

In this article, you will learn that:

- Retrieval-augmented generation (RAG) for customer support is crucial because it grounds every AI response in an external knowledge base.

- A RAG pipeline moves through six stages: ingestion, indexing, querying, retrieval, augmentation, and generation.

- RAG for customer support improves support accuracy and reduces ticket volume.

- Agentic RAG works through a problem step by step until it gets a complete answer.

And we’ll show you how to determine if your organization is ready for RAG and, if so, how to build it.

Let’s break down how RAG works in customer support and what it takes to implement it properly.

What is RAG in customer support?

RAG in customer support is a framework that gives AI systems access to accurate, up-to-date information before generating a response. Answers to customer queries come from an external knowledge base rather than from the model’s training data alone.

For instance, when a customer asks a question, a RAG system first retrieves the most relevant documents. It then adds the documents to the initial question and generates an answer based on its findings.

This process greatly reduces the risk of outdated answers and outright hallucinations.

How does RAG improve customer support accuracy?

RAG improves customer support accuracy by reducing the model's reliance on memory. A generative AI model that returns its answers solely from memory risks returning outdated information.

With RAG, the model uses data from integrated knowledge bases to answer the question. Here is what changes specifically:

- Grounded responses: RAG draws directly from documentation and internal knowledge bases before generating any responses. This means the answer comes from a source you can control.

- Fewer hallucinations: Hallucinations are more likely when a model generates answers without retrieved information to ground them. RAG reduces this risk by retrieving relevant information from the knowledge base before generating an answer.

- Consistent and relevant answers: Without RAG, two customers can ask the same question in slightly different ways and receive very different answers. With RAG, the retrieved information is anchored to the same source, which makes responses more consistent.

Why use RAG for support automation?

Using RAG for support automation improves the overall customer experience. That is critical for any business.

RAG chatbots can use artificial intelligence to instantly generate answers from your help articles, so your customers quickly get solutions to their problems.

RAG systems also help reduce ticket volume. The AI system handles FAQs, so your human agents do not need to be present to address them. Instead, agents can focus on more complicated questions that require a human touch.

Another advantage is scalability. Because RAG works with your datasets, the AI assistant can answer more questions as you add more help articles. Whether it receives one support ticket or a thousand, the AI assistant can handle them all without breaking a sweat.

You can keep your customers happy without significantly increasing resources.

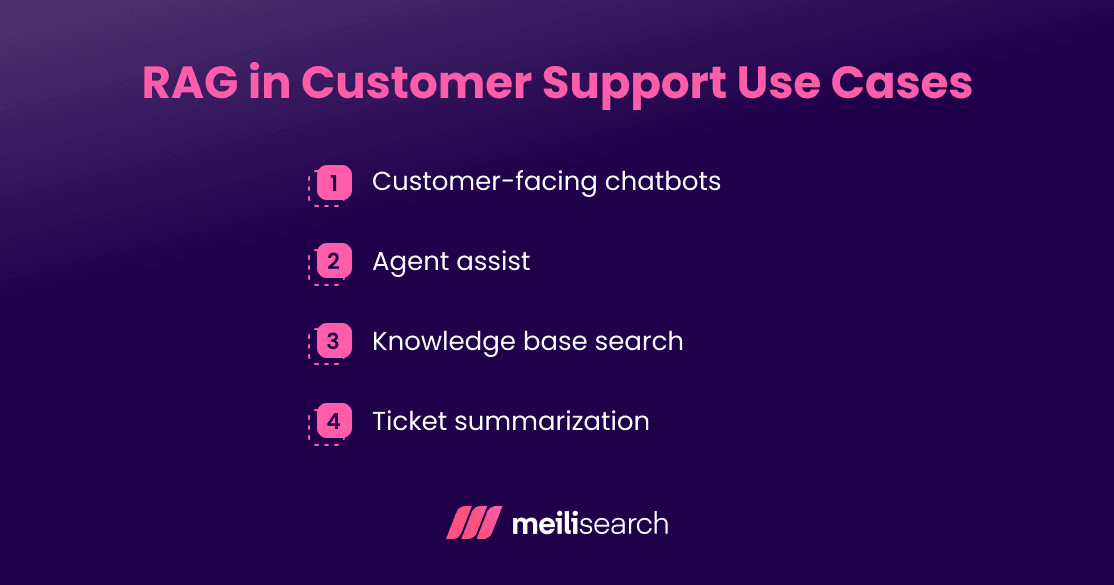

What are RAG use cases in customer support?

The use cases of RAG in the customer support workflow vary. Let’s see some of the most important ones:

- Customer-facing chatbots: A customer asks a question; the RAG system retrieves relevant information from a knowledge base and generates a direct answer.

- Agent assist: Human agents do not always have all the answers, especially for complex products. They might not be able to find the document that contains the answer. RAG helps agents get the information they need, making them more efficient.

- Knowledge base search: A traditional keyword search matches the customer's query word by word. RAG retrieval instead uses semantic search to capture the intent behind a query. This means that relevant articles can be found even when the phrasing does not match any keyword within that article.

- Ticket summarization: In large companies, long support tickets are common, and they can carry a lot of noise. RAG can condense a ticket into a short summary of what really matters, so a human agent has a good understanding of the ticket before opening it.

How does a RAG pipeline work in customer service?

Here is how a RAG pipeline turns a customer request into an accurate answer.

- Ingestion: Supporting content is loaded into the RAG system. These are the data sources used to augment the AI responses, for example, your policy documents or product guides.

- Indexing: The ingested data gets converted into vector embeddings and stored in a vector database.

- Querying: A user poses a question to the customer support system.

- Retrieval: The RAG system searches the database it has access to and returns the most relevant documents based on the query's meaning.

- Augmentation: The retrieved documents are combined with the original user question into a single prompt. The AI model now has relevant context to work with.

- Generation: The large language model (LLM) reads the augmented prompt and produces a grounded response in real time. That is the text the customer sees.

Note that the relevance of the answer and, consequently, customer satisfaction will depend on how effectively each step is carried out.
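The stages above can be sketched end to end in a few lines. This is a toy illustration, not a production design: word overlap stands in for vector embeddings, `generate` is a placeholder for a real LLM call, and every name here (`Document`, `build_index`, `retrieve`, `augment`, `generate`) is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase, split on whitespace, and strip basic punctuation."""
    return {word.strip(".,?!:;").lower() for word in text.split()} - {""}


def build_index(documents: list[Document]) -> dict[str, set[str]]:
    """Ingestion + indexing: map each document id to its token set.
    A real system would store vector embeddings here instead."""
    return {doc.doc_id: tokenize(doc.text) for doc in documents}


def retrieve(query: str, index: dict[str, set[str]],
             documents: list[Document], top_k: int = 2) -> list[Document]:
    """Retrieval: rank documents by word overlap with the query,
    dropping documents that share no words with it."""
    query_tokens = tokenize(query)
    by_id = {doc.doc_id: doc for doc in documents}
    ranked = sorted(index.items(),
                    key=lambda item: len(query_tokens & item[1]),
                    reverse=True)
    return [by_id[doc_id] for doc_id, tokens in ranked[:top_k]
            if query_tokens & tokens]


def augment(query: str, context: list[Document]) -> str:
    """Augmentation: combine retrieved text and the question in one prompt."""
    context_block = "\n".join(f"- {doc.text}" for doc in context)
    return f"Answer using only this context:\n{context_block}\n\nQuestion: {query}"


def generate(prompt: str) -> str:
    """Generation: placeholder where a real system would call an LLM."""
    return f"[LLM response to a prompt of {len(prompt)} characters]"


if __name__ == "__main__":
    docs = [
        Document("refunds", "Refunds are issued within 14 days of purchase."),
        Document("shipping", "Standard shipping takes 3 to 5 business days."),
    ]
    index = build_index(docs)                      # ingestion + indexing
    question = "How long does shipping take?"      # querying
    context = retrieve(question, index, docs)      # retrieval
    print(generate(augment(question, context)))    # augmentation + generation
```

Swapping the overlap score for embedding similarity and the stub for a model call changes the quality of each stage, but not the overall flow.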

Let’s zoom in on the key steps.

How is support data indexed?

Support data is indexed by transforming it into a format that AI models can understand.

First, the documents are broken down into smaller ‘chunks.’ Each chunk is then converted into a numerical representation called an embedding. These embeddings are stored in a specialized vector database and can be retrieved during semantic search.
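As a rough illustration of the chunking step, the sketch below splits a document into fixed-size word windows that overlap slightly, so a sentence cut at a boundary still appears whole in one chunk. The function name `chunk_text` and the default sizes are assumptions chosen for the example; real systems often chunk by tokens, sentences, or headings instead. The embedding step is left out because it depends on the model you choose.

```python
def chunk_text(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of up to `chunk_size` words.
    Consecutive chunks share `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks: list[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


if __name__ == "__main__":
    article = "To reset your password, open Settings and choose Security. " * 20
    for number, chunk in enumerate(chunk_text(article, chunk_size=40, overlap=8), 1):
        print(number, len(chunk.split()), "words")
```

Smaller chunks tend to make retrieval more precise, while larger chunks keep more surrounding context, so the sizes are worth tuning against your own help content.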

Meilisearch excels at performing fast semantic search on this vectorized data. It instantly finds the chunks that are contextually similar to a user's query.

How does retrieval select relevant context?

Retrieval selects context by finding the documents that are closest in meaning to the query. This process is called semantic search.

For example, imagine a customer searching for ‘a quiet place to stay near Barcelona.’ A simple keyword search might show only the closest hotels, regardless of noise levels.

Semantic search, however, interprets the user's intent as ‘tranquility.’ It then looks through hotel reviews and descriptions for concepts related to ‘peace and tranquility’ before recommending options.
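One common way to measure "closest in meaning" is cosine similarity between embedding vectors. The sketch below assumes the embeddings already exist; the three-dimensional vectors are made up by hand for illustration (real embeddings have hundreds of dimensions and come from an embedding model), and the function names are hypothetical.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vector: list[float],
                       item_vectors: dict[str, list[float]],
                       top_k: int = 3) -> list[tuple[str, float]]:
    """Return the `top_k` items most similar to the query, best first."""
    scored = [(item_id, cosine_similarity(query_vector, vector))
              for item_id, vector in item_vectors.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


if __name__ == "__main__":
    # Hand-made toy vectors; the first dimension loosely stands for "quietness".
    listings = {
        "quiet_hillside_inn": [0.8, 0.2, 0.3],
        "busy_downtown_hotel": [0.1, 0.9, 0.4],
    }
    query = [0.9, 0.1, 0.2]  # pretend embedding of "a quiet place to stay"
    for listing_id, score in rank_by_similarity(query, listings):
        print(f"{listing_id}: {score:.3f}")
```

Because the comparison happens in vector space, a listing can rank first without sharing a single keyword with the query.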

How does generation produce final answers?

Generation produces the final answer by giving the LLM a prompt containing relevant information.

This process begins with prompt construction. The system creates a detailed set of instructions for the LLM. After this, both the user's query and the retrieved document chunks are injected into the prompt. This is known as context injection.

The LLM then reads the constructed prompt and generates a context-aware answer. If this is done right, there will be few or no hallucinations.

Generation is the final output your customer sees.
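Prompt construction and context injection can be as simple as string assembly. The sketch below is one possible layout, not a required format: the instruction wording, the numbered context style, and the `build_prompt` name are all assumptions made for the example.

```python
def build_prompt(question: str, chunks: list[str], max_chunks: int = 3) -> str:
    """Assemble instructions, retrieved chunks, and the user question
    into a single prompt string for the LLM."""
    instructions = (
        "You are a customer support assistant. "
        "Answer using only the numbered context below. "
        "If the context does not contain the answer, say you do not know."
    )
    # Context injection: number each chunk so the answer can refer back to it.
    context = "\n".join(
        f"[{number}] {chunk}"
        for number, chunk in enumerate(chunks[:max_chunks], start=1)
    )
    return f"{instructions}\n\nContext:\n{context}\n\nQuestion: {question}\nAnswer:"


if __name__ == "__main__":
    retrieved = [
        "Refunds are issued within 14 days of purchase.",
        "Refunds go back to the original payment method.",
    ]
    print(build_prompt("How do I get a refund?", retrieved))
```

Telling the model to admit when the context lacks an answer is a common way to keep it from filling the gap with a guess.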

Which AI is best for customer support?

The right AI system for customer support is the one that meets your specific requirements. Here are some criteria to consider when choosing:

- LLM quality: The model needs to handle poorly phrased customer questions and still produce accurate answers. Test to confirm that the LLM you choose performs well for your use case. Do not rely on benchmark scores alone. A model can top every score and still struggle with the questions that your customers ask.

- Integration flexibility: When selecting an AI model, ensure it seamlessly integrates with your tools. It needs to be able to connect to your knowledge base and communication channels.

- Cost: Most API-based models charge per token. As you scale up, the costs can add up faster than expected. Always factor in retrieval and generation costs together before starting.

- Security: Customer data is sensitive data. The AI handling it needs to meet your security or compliance requirements. In enterprise support, for instance, confirm that the system is compliant with data residency rules.
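To see how per-token pricing adds up, a back-of-the-envelope estimate helps. The sketch below multiplies token counts by per-1,000-token prices; every number in the usage example is a made-up placeholder, not a real vendor price, and `estimate_monthly_cost` is a hypothetical helper.

```python
def estimate_monthly_cost(
    queries_per_month: int,
    prompt_tokens: int,
    completion_tokens: int,
    input_price_per_1k: float,
    output_price_per_1k: float,
    embedding_tokens: int = 0,
    embedding_price_per_1k: float = 0.0,
) -> float:
    """Estimate monthly spend from average per-query token counts.
    Prices are expressed per 1,000 tokens."""
    generation_cost = (
        prompt_tokens / 1000 * input_price_per_1k
        + completion_tokens / 1000 * output_price_per_1k
    )
    # Retrieval cost: embedding the query at search time (if billed per token).
    retrieval_cost = embedding_tokens / 1000 * embedding_price_per_1k
    return queries_per_month * (generation_cost + retrieval_cost)


if __name__ == "__main__":
    # Placeholder values only; substitute your provider's actual prices.
    cost = estimate_monthly_cost(
        queries_per_month=10_000,
        prompt_tokens=1_500,      # instructions + retrieved chunks + question
        completion_tokens=200,
        input_price_per_1k=0.001,
        output_price_per_1k=0.002,
        embedding_tokens=20,
        embedding_price_per_1k=0.0001,
    )
    print(f"Estimated monthly cost: {cost:.2f}")
```

Note that retrieved chunks count toward prompt tokens, so injecting more context per query raises generation cost as well.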

When should companies implement RAG?

Companies should implement RAG to optimize their customer experiences with AI. The right time is when the cost of not having it exceeds the cost of building it.

That time varies from company to company. But here are signs to determine how ready you are:

- Data maturity: RAG is only as good as the knowledge base behind it. It works best when it has reliable data sources to draw from. If your data is not available, you are not ready for RAG. If it is messy, you need to clean it before implementing RAG.

- Ticket volume: When repetitive questions consume a good amount of your support team's time, that is a sign. If these queries can be answered using existing documents, RAG can provide automated responses with a human feel.

- Automation goals: RAG works best when it serves a clear business goal. Be specific about what you want to use RAG for, such as answering repetitive questions from existing documents.

- Technical capacity: RAG requires engineering investment. Vector databases. Retrieval pipelines. LLM integration. None of that runs itself. You need technical expertise to set up and maintain the system.

Frequently Asked Questions (FAQs)

What are RAG pipeline components?

A RAG pipeline has five core components:

- The embedding model that converts text into vectors.

- The vector store that holds those vectors for fast search.

- The retriever that finds the most relevant chunks at query time.

- The generator (or the LLM) that produces the final response.

- The orchestration layer that ties it all together and manages the flow between steps.

What is agentic RAG for support?

Agentic RAG is a type of RAG that can reason through a problem and take multiple steps to achieve a goal.

Basic RAG retrieves once and generates once. Agentic RAG performs multiple actions until it has a sufficiently good answer.
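The difference can be sketched as a loop around retrieval. In the hypothetical example below, `retrieve`, `is_sufficient`, and `refine_query` are passed in as plain functions; in a real agentic system the last two would typically be LLM calls, and the step limit is an assumption added to keep the loop from running forever.

```python
from typing import Callable


def agentic_retrieve(
    question: str,
    retrieve: Callable[[str], list[str]],
    is_sufficient: Callable[[str, list[str]], bool],
    refine_query: Callable[[str, list[str]], str],
    max_steps: int = 3,
) -> tuple[list[str], int]:
    """Retrieve repeatedly, refining the query until the gathered context
    is judged sufficient or `max_steps` is reached.
    Returns the collected context and the number of steps taken."""
    context: list[str] = []
    query = question
    for step in range(1, max_steps + 1):
        for chunk in retrieve(query):
            if chunk not in context:  # avoid collecting duplicates
                context.append(chunk)
        if is_sufficient(question, context):
            return context, step
        query = refine_query(question, context)  # decide what to look up next
    return context, max_steps


if __name__ == "__main__":
    # Toy knowledge base keyed by exact query, for illustration only.
    knowledge = {
        "reset router": ["Hold the reset button for 10 seconds."],
        "reset router warranty": ["Resetting does not void the warranty."],
    }
    context, steps = agentic_retrieve(
        "reset router",
        retrieve=lambda q: knowledge.get(q, []),
        is_sufficient=lambda q, ctx: len(ctx) >= 2,
        refine_query=lambda q, ctx: q + " warranty",
    )
    print(steps, context)
```

With `max_steps=1`, the same function behaves like basic RAG: one retrieval, then generation.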

What’s the difference between RAG and fine-tuning?

The main difference between RAG and fine-tuning is how the AI model learns and uses information.

RAG retrieves information at query time, keeping knowledge fresh without retraining. Fine-tuning permanently teaches a model new knowledge by retraining it on a static dataset.

RAG is cheaper to maintain and easier to update. Fine-tuning, on the other hand, gives you more control over how the model behaves. The trade-off is that keeping a fine-tuned model current is expensive, because new knowledge requires retraining.

Why RAG for customer support is becoming essential

Providing immediate, accurate answers in customer support is now the baseline for businesses.

RAG for customer support is what makes that baseline achievable at scale. It provides teams with a system that gets better as its knowledge base improves.

How Meilisearch simplifies retrieval and accelerates RAG implementation

Getting retrieval right is one of the harder parts of building a RAG support system. Meilisearch’s hybrid search features help the right documents surface faster and with less noise. When less noise reaches the LLM, customers get better answers.