How to build and optimize RAG in AI for reliable answers

Learn how RAG in AI works in practice, how to improve retrieval relevance, evaluate quality, secure data, and keep results up to date in production.

In this article

Retrieval-augmented generation (RAG) in AI is an architecture that combines information retrieval with text generation.

Instead of relying solely on a model’s training data, a RAG AI system retrieves relevant information from external data sources and uses it to generate accurate, grounded responses.

This guide is your go-to on how RAG works and how to build it properly. We’ll look at:

- A clear explanation of retrieval-augmented generation, how it connects large language models to external knowledge, and how it aids retrieval.

- How big a role RAG has in grounding generative AI and reducing AI hallucinations.

- A practical view of how a RAG pipeline forms through embeddings, vector search, APIs, and workflows.

- How semantic search, chunking, and hybrid retrieval improve relevance.

- Simple methods for evaluating RAG quality using accuracy and user trust signals.

- The most common RAG implementation failures that break AI systems at scale.

- Crucial security considerations for customer data, repositories, and internal knowledge sources.

- The importance of keeping RAG systems aligned with new data and up-to-date information.

- Where platforms like Meilisearch fit into modern RAG architectures.

- A clear direction for moving from concept to production in RAG implementation.

With this guide, you’ll learn a structured path to building reliable, scalable RAG systems.

Let’s start with what RAG is in AI.

What is RAG in AI?

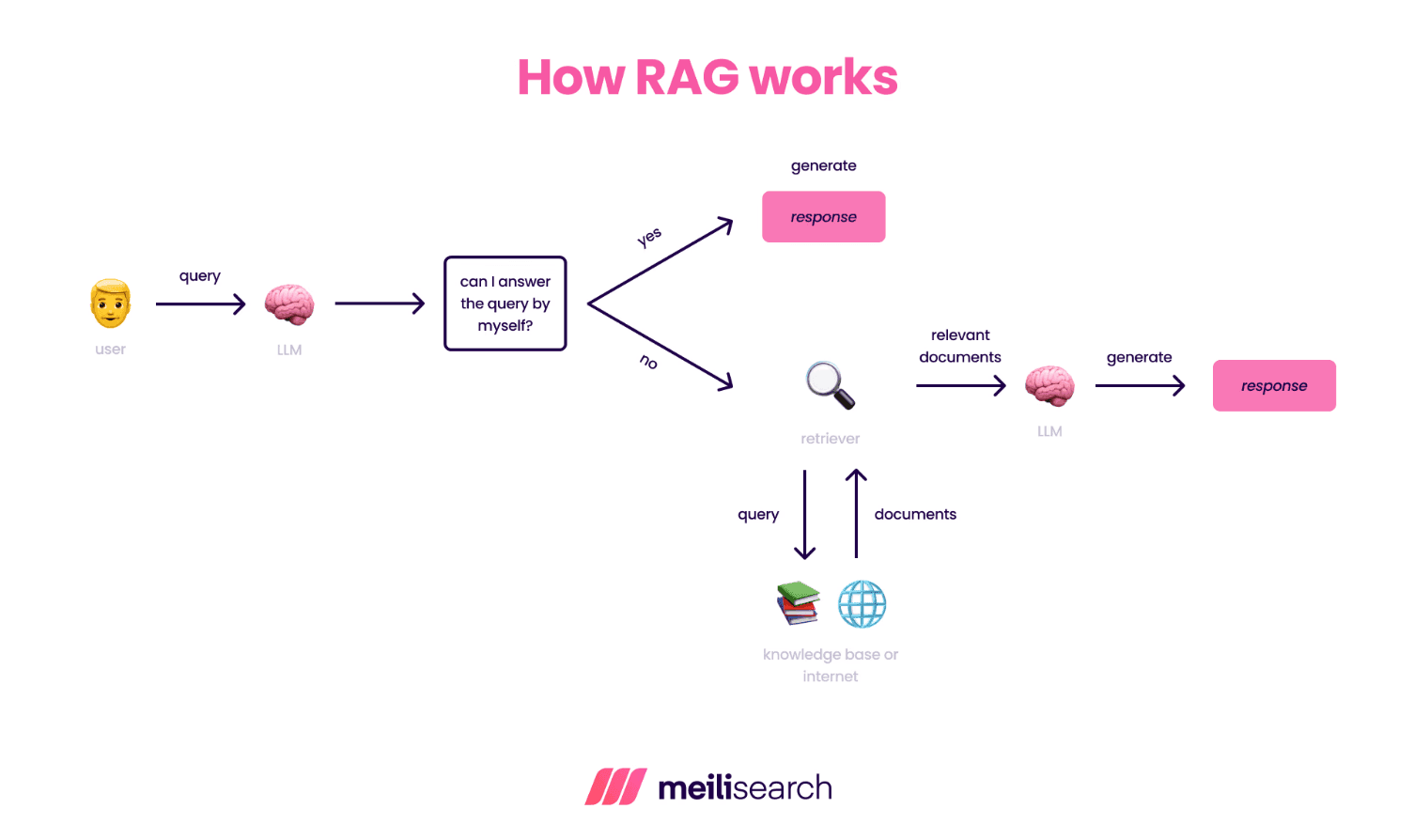

RAG in AI combines retrieval and generation in a single workflow.

Rather than relying solely on training data, RAG AI systems use external sources to retrieve critical information. Using the retrieved information, LLMs generate the most relevant response to a user query.

RAG is crucial today because it reduces AI hallucinations in results. Artificial intelligence systems can access up-to-date information rather than relying solely on static datasets used for training and retraining.

The most common example you see every day is that of adaptive RAG in a chatbot. It retrieves answers from a live knowledge base and generates a relevant response for the user.

What is retrieval-augmented generation in generative AI?

In generative AI (genAI), the RAG system provides LLMs with access to external knowledge sources before any AI-powered generation occurs.

With context derived from these sources, genAI produces a more suitable and relevant answer for the user because it isn’t relying on model memory alone (that’s CAG).

This is why RAG works well for customer support chatbots, AI assistants, and domain-specific AI applications, especially in healthcare. The AI model operates within a system, not in isolation, which reduces hallucinations and improves reliability.

A simple RAG AI workflow looks like this:

- A user asks the AI model a question

- The RAG system retrieves relevant documents from external sources

- The AI model generates an answer based on that context

- The user receives a more relevant and more accurate result

How do you build a RAG pipeline?

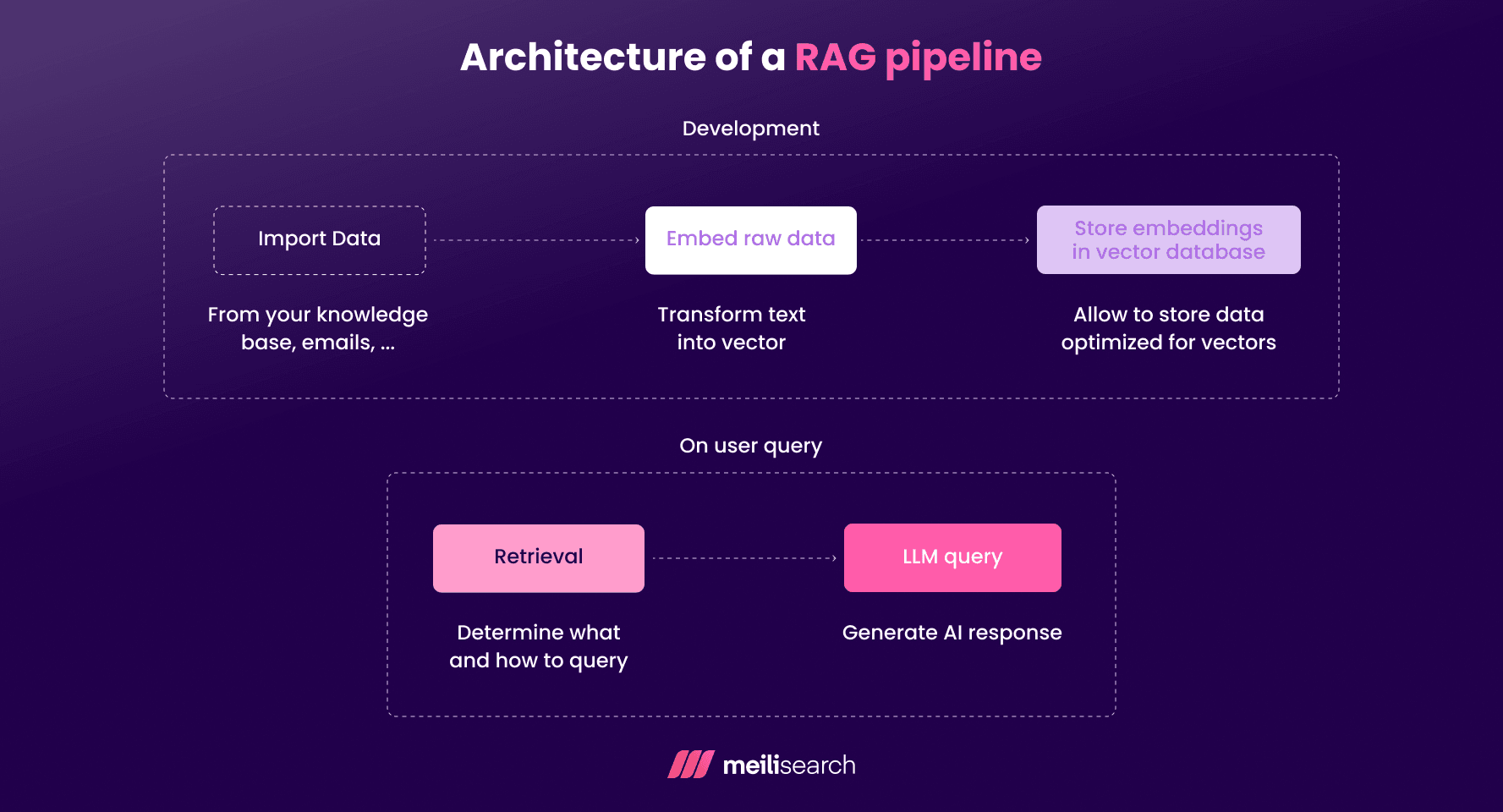

A RAG pipeline has three components in a single system: data, retrieval, and generation.

The goal of the RAG workflow is to retrieve the right information first (from real data sources) and then generate an answer based on the proper context.

Here’s what a practical build sequence looks like:

- Data ingestion: First, you connect the external data sources to the AI model.

- Chunking: Next, the content is chunked into usable units and embedded for vector- and hybrid-search.

- Indexing: The embedded chunks are stored in a vector database or search index, often with metadata to support filtering and ranking.

- Retrieval: When a user submits a query, it triggers the retrieval of relevant documents.

- Prompting and generation: The retrieved documents and context enrich the original user prompt, and the LLMs generate a relevant response.

- Evaluation: Finally, the system is evaluated against specific metrics, including relevance, latency, hallucinations, and quality.

Depending on your needs, you can add additional elements to the system, such as hybrid search, metadata filtering, ranking logic, monitoring pipelines, and continuous evaluation.

How do you improve RAG retrieval relevance?

No matter how strong your model is, if the retrieved information doesn’t match user intent, the answers will fall below expectations. As such, retrieval should have the same priority as generation, especially in high-end RAG pipelines.

Here are a few actionable ways to improve RAG retrieval relevance:

- Use hybrid search: Combine keyword and vector search to improve retrieval, as both exact matches and semantic similarity come into play.

- Apply metadata filters: These filters let you remove irrelevant data based on type, source, domain, time range, or access level metrics.

- Add reranking layers: A not-so-secret technique is to reorder retrieved documents using relevance-scoring models so that the most useful context appears first.

- Use query rewriting: Lets you expand or clarify user queries before retrieval.

- Tune chunking strategy: Adjusting chunk size and overlap is critical to ensure the retrieved content is sufficient without adding noise.

- Optimize top-k selection: Make sure that you only retrieve the most relevant documents instead of flooding the prompt with low-value data.

Now, let’s evaluate RAG model quality.

How do you evaluate RAG quality?

How well a RAG system performs depends on its three layers: retrieval, generation, and system performance.

The best combination to evaluate such a system is offline testing and live production monitoring. Let’s see below:

- Offline evaluation methods: First up, you’ll need to use benchmark queries and labeled datasets to test retrieval precision. Are the relevant documents appearing in the top-k results? Similarly, evaluate generation quality using metrics such as groundedness checks, faithfulness scoring, and answer-to-source alignment.

- Online evaluation methods: You can also assess RAG quality by tracking real user interactions and feedback. Additionally, AI hallucination rates and answer acceptance will give you a solid idea of the workflow’s efficiency.

- Retrieval metrics: Metrics such as Precision@k, Recall@k, and retrieval coverage are your best friends.

- Generation metrics: Faithfulness, groundedness, hallucination rate, citation accuracy, and answer consistency.

- System metrics: Key metrics include latency, cost per query, API response time, embedding cost, and pipeline throughput.

This whole evaluation structure reveals where failures occur and what to optimize next.

What are common RAG mistakes?

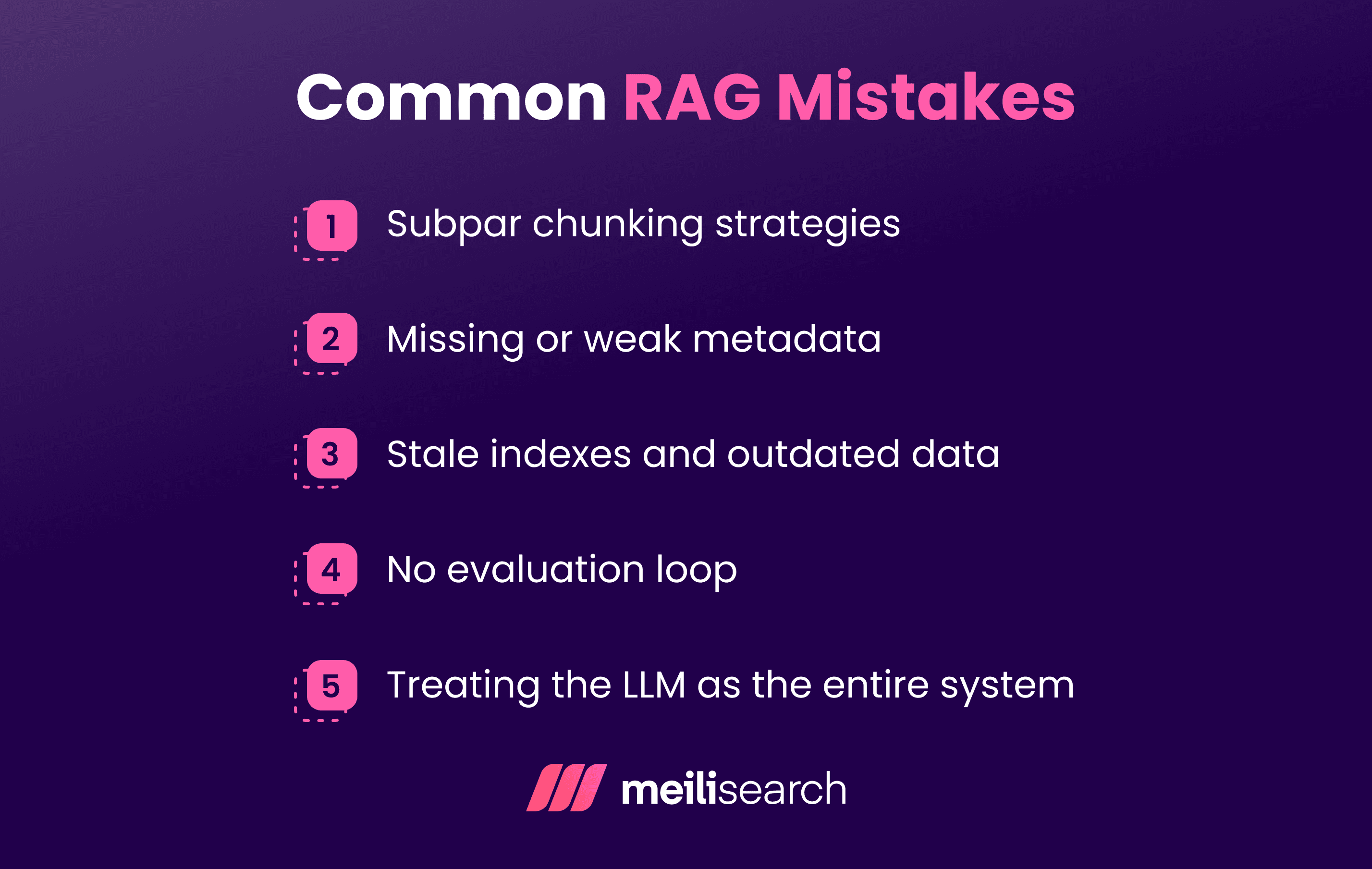

Most RAG failures stem from system design issues, not from model quality. These mistakes increase the likelihood of hallucinations and reduce trust in AI software.

Fixing them early can save you significant trouble in the future.

1. Subpar chunking strategies

Bad chunking equals fragmented answers and partial context in the results. To resolve this, adjust the chunk size and overlap. Instead of fixed splits, try semantic or recursive chunking. Each chunk should contain complete, meaningful context.

2. Missing or weak metadata

If you want to keep surfacing relevant documents, ensure the metadata is strong. Otherwise, the retrieval can pull documents from differing domains and hurt retrieval quality. Structured metadata, including source, domain, timestamps, access level, and document type, is useful here.

3. Stale indexes and outdated data

Outdated data leads to outdated search results. The simple fix is to automate reindexing, incremental updates, and refresh pipelines.

4. No evaluation loop

Without continuous monitoring, systems will degrade and answer quality will decline. To address this, focus on tracking retrieval precision alongside latency and user feedback. Try to combine offline testing with live production monitoring.

5. Treating the LLM as the entire system

The LLM cannot be treated as the entire system, nor can it be ignored. It needs to be considered an equal part of the workflow. Overreliance on the LLM will increase hallucinations, and undervaluing it will fragment responses.

How do you secure data in RAG?

To secure data in RAG, you cannot enforce controls only at the generation layer. Control at the retrieval layer is equally important, if not more so.

After all, if sensitive data is never retrieved, it can never be generated.

Security in a RAG system is improved with access control, isolation, filtering, and visibility across the entire retrieval and generation workflow.

Here's the checklist:

- Retrieval-time access control: Restrict which documents can be retrieved based on user identity, role, or permissions. Enforce filtering before retrieval, not after.

- Multi-tenant isolation: The practice of maintaining separate indexes, namespaces, or datasets for different users, teams, or organizations.

- PII handling and data classification: You must tag sensitive fields and documents using metadata. Another healthy security practice is to exclude personal data and regulated content from retrieval pipelines unless explicitly authorized.

- Secure API usage: Never expose master keys in client-side environments. Use scoped keys, role-based access, and token-based authentication for all retrieval operations.

- Audit logs and traceability: Logging helps maintain traceability between user queries, retrieval, and generation in RAG systems.

- Controlled data ingestion: Strictly control the data being ingested. You must validate sources before indexing to prevent untrusted or unverified data from entering the knowledge base.

Security in RAG is architectural. When retrieval is controlled, generation becomes safe by design.

How do you keep RAG results up to date?

RAG results can be kept up to date by controlling how data is indexed, refreshed, and re-embedded throughout the retrieval pipeline.

For large, stable datasets, such as policy archives or company documentation repositories used by AI agents, batch indexing is the preferred approach.

For older records, versioning is your best option, as it ensures they can be replaced safely. Combine this with deletion handling, and all outdated or invalid content will be removed from the indexes, reducing hallucinations from pre-trained models.

Re-embedding is also vital. It should be triggered when either the content meaning changes or when files are updated, especially when retrieval algorithms depend on semantic accuracy. Changes like structural edits or major rewrites require new embedding, while minor formatting updates don’t.

The optimal update cadence varies by data type. For instance, product data needs to be updated daily. On the other hand, static knowledge bases can be refreshed weekly or monthly. Support content and logs must be updated in near-real time, including content sourced from open-source systems.

How does Meilisearch fit into a RAG system?

Meilisearch is a great retrieval layer for a RAG system.

Here’s what a RAG flow with Meilisearch looks like:

- A user submits a query.

- Meilisearch retrieves the most relevant context.

- That context is passed to the LLM.

- The model generates a grounded response.

What makes Meilisearch powerful in RAG architectures is its handling of context quality. The hybrid search from Meilisearch combines keyword matching with vector search to unlock exact precision and semantic understanding.

This automatically prevents common failure modes in which systems retrieve unnecessary information without any actual context.

Plus, Meilisearch’s fast filtering lets teams apply metadata constraints, such as tenant isolation, document type, access level, time range, or domain boundaries, before retrieval begins. Only valid, relevant context may be included in the prompt.

From an engineering perspective, Meilisearch simplifies the entire pipeline. There is no need to stitch together separate keyword engines, vector databases, ranking layers, and filtering services. Meilisearch integrates retrieval, ranking, hybrid search, and filtering into a single system. As a result, you reduce infrastructure complexity and eliminate glue code.

It’s important to emphasize that Meilisearch does not replace the LLM. It controls the information surface on which the LLM operates. That separation of responsibility is what makes RAG systems reliable and production-ready.

What to do next with RAG in AI

RAG is not a feature but a system design approach.

The next step for RAG in AI isn’t to add more generative models like GPT, but to strengthen the retrieval quality, grounding logic, and architecture.

User trust is easily earned by teams that treat their RAG as infrastructure instead of experimentation.